AWS Lambda: Comparing Golang and Python

Serverless functions are great for lightweight cloud architecture and rapid provisioning. However, sometimes serverless introduces additional complexity to the deployment process. I compare Python and Go with respect to the ease of deployment when setting up a simple data factory on AWS Lambda. The factory makes many HTTP requests, validates responses, and creates parquet files. Go shows to have advantages over Python due to the packaging system, cross-platform compilation, and native parquet implementation.

Serverless functions are a great way to simplify your cloud stack and quickly produce new functionality. Serverless contributes to scalability, transferability, and flexibility of the entire stack. For me as a data engineer, this means that I can produce applications that will be understood by clients and can adapt to evolving requirements.

It’s not all perfect, though. In a recent project, I noticed something interesting: deploying to AWS Lambda was particularly awkward in my favorite language, Python. While solving these issues, transferability and flexibility of the stack decreased more than I would have preferred. How could I transfer this stack to a colleague with less engineering experience?

Not much later, Go crossed my path. I quickly recognized that Go takes away many of the hurdles that Python had given me. This blog tries to answer the following questions:

- What are the differences in packaging and deployment between Python and Go?

- How can concurrent programming improve the lambda function’s effectivity?

I will try to answer these questions by going through the following topics:

- CI/CD: Platform compatibility

- AWS limits, concurrent requests and validation

- Writing parquet to S3

Case study

A bunch of IoT devices add data to a database, which is accessible via a REST API. The case study requires the extraction of many, large jsons from that REST API. The jsons contain some metadata and a very long list of values:

{

"datetime": "2020-01-27T07:07:27.744489",

"values": [2210, 13260, 26837, 30913, 46491, 95062, 4152, ...]

}

Each of the jsons needs to be collected in a separate HTTP request, so the number of HTTP requests is high. The responses need to be validated as soon as they come in.

For storage and further processing, the jsons are combined and stored in parquet files on AWS S3. This is in a tabular format that looks like this:

The parquet files contains a datetime column of strings and a values column containing lists filled with int64s.

Serverless functions

With serverless functions, you just upload and run your code, without worrying about setting up servers. Serverless functions such as AWS Lambda are a great way to create a scalable architecture with little engineering effort. The serverless concept makes it possible to isolate small pieces of architecture with clear and limited responsibilities. The low-level of complexity enables the developer to make incremental changes and keep up rapid development cycles.

CI/CD: Platform compatibility

Of course, a serverless function is not really serverless. Serverless just means that you don’t need to set up a server yourself. Lambda requires you to upload all the required packages, except for boto3. The Lambda host is an Amazon Linux machine and the packages you ship must therefore be Linux-compatible.

Deployment to Lambda requires three steps:

- Build and package

- Zip and upload to S3

- Tell the Lambda function to use the newly uploaded packages

Cross-platform compatibility is a problem for the C-libraries on which many Python packages rely. For example, you cannot simply package and upload the pandas package from Mac or Windows to AWS Lambda and expect it to run. The problem of cross-platform compatibility is especially present in the development stage. This is a relevant issue since development and experimentation should be quick and easy for all team members.

Below, we compare deployment for both languages. Both CI/CD cases are implemented in GitHub actions with action deployments to Lambda, you can find the workflows here: Go, Python. Terraform definitions are found here.

Python in AWS Lambda

So how do you create compatible Python packages? There are three options:

- Set up a Linux build server and build your package there.

- Download the wheels compiled for Linux and ship them.

- Package using the Lambci Docker image.

The last option is my favorite because it requires the least effort. Here is an example command that does this:

docker run --rm -v "$(pwd)":/foo -w /foo lambci/lambda:build-python3.7 pip install -r requirements.txt -t lambda-packaged/

Since this is a Linux Docker container, the installed Python packages are compatible. This will create a directory called lambda-packaged, which can be zipped and uploaded to S3.

Layers

A more realistic setup is to create a Lambda Layer, in which you can place the needed packages. The layer is loaded by the function when it is invoked. Packages are thus removed from the function itself. The advantage of this approach is that you’ll only update the layer when the requirements.txtchanges, which reduces building time (example). Layers may be used by multiple functions. Some dependency between the layer and the function is introduced, as the function needs to be explicitly updated when a new version of the layer is created. The building process is similar to the Lambci container, see the workflow for details.

When updating while using a layer, more steps need to be taken (YAML):

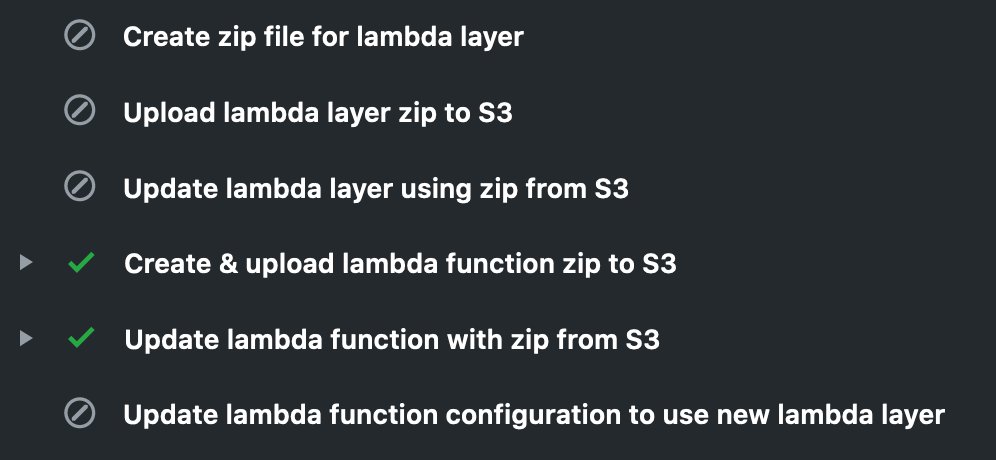

CI/CD pipeline that updates the lambda function. In this particular run, the layer was not updated, since the requirements.txt did not change. Corresponding YAML file here.

The pipeline clearly shows which steps are executed and which are skipped. In this case the lambda layer was not updated. Introduction of the lambda layer complicates the deployment, but significantly reduces building time.

Golang on AWS Lambda

In contrast to Python, Go compiles to machine code. This means that a Go executable natively runs on the host machine, unlike Python which needs an interpreter. A Go executable compiled for Mac will not run on AWS Lambda. But luckily it is easy to cross-compile Go code for Linux. Which is exactly what we need. The following line compiles requests.go for a Linux machine.

GOOS=linux GOARCH=amd64 go build requests.go

The resulting package can be uploaded to AWS Lambda right-away! The CI/CD pipeline looks like this:

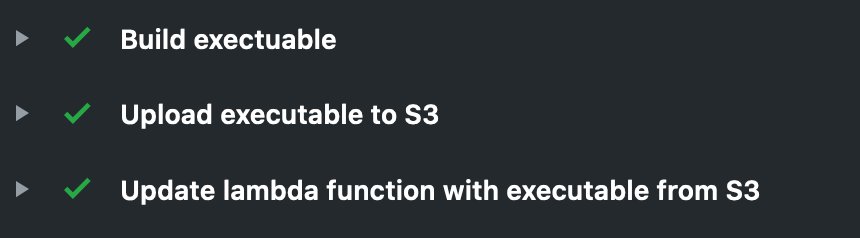

CI/CD pipeline tun to deploy the Go code to AWS Lambda. YAML is found here.

The number of steps is limited to a minimum and the dependency between the steps in straightforward. The YAML for this pipeline is found here.

CI/CD conclusions

Lambda functions require you to package and ship all your code and dependencies to AWS. Code needs to be Linux compatible with the AWS Linux distribution, which imposes some additional work if your development environment is not compatible. Python packing requires care when it comes to cross platform compatibility and the directory structure. Go allows for cross-platform compilation and static linking, making it easy to deploy new Lambda versions.

AWS limits, concurrent requests and validation

To obtain the jsons, a large number of HTTP requests need to be made to the REST API (e.g. >10k per hour). Although Lambdas are cheap to invoke, any solution involving this number of invokes will be unnecessarily expensive. This can be solved by making many HTTP requests in a single Lambda invocation. But there are limitations to this:

- Lambda has a max duration of 15 minutes. So, the code cannot run longer than that period of time.

- The maximum memory use of 3GB cannot be exceeded.

To keep runtime and memory under control, HTTP requests must be made concurrently, but not by spawning many processes since memory limits could be exceeded. The answer is to use concurrent HTTP requests: Python AsyncIO or Go’s goroutines.

For each particular use case it must be assured that the responses are not too large for this memory. For my use case, I checked that it would never exceed 2GB. Otherwise, a solution with multiple lambda invocations must be constructed.

Python

In Python 3.5, AsyncIO is included in the standard library to introduce asynchronous programming. For us, this means that the next HTTP request can be made while another function is waiting for an HTTP response. Asynchronous HTTP requests can be made with the aiohttp package. When using aiohttp, the Python code to make the HTTP requests may look like this:

Python code to make multiple HTTP requests using aiohttp.

The await keyword indicates statements that will take time and allows Python to start other asynchronous functions. The async keyword indicates that the function will contain an awaitable statement.

The use of async/await may be overwhelming when you see it for the first time, but in this case, it is really worth the effort. An introduction to AsyncIO can be found here.

Go

Similar concurrent code can be written in Go. The built-in goroutines make it easy to send HTTP requests concurrently:

Go snippet to make 100 HTTP requests concurrently. The waitgroup ensures no goroutine is forgotten.

The Go code contains two ingredients to make concurrent requests: the go keyword and the WaitGroup. The go keyword indicates that other Get functions can be started while the Get’s are waiting for the HTTP response. The WaitGroup is just a counter that keeps track of the number of Get functions that are still waiting for a response.

Asynchrony conclusions

Lambda puts memory and runtime constraints on our code. Since the code mostly exists of waiting for the REST API, concurrency can be used to make a large number of HTTP requests from one Lambda invocation. Both languages have built-in functionality to achieve concurrent HTTP requests. Python offers AsyncIO to do this, while Go has built-in goroutines that can do the job.

Deserializing the response

Deserializing the response validates the content and is essential when calling external resources. Deserialization requires a data model of the json that we expect and implementing the data model can be tedious work. Let’s look at the most straightforward ways of validating HTTP responses with Python and Go.

The goal is to model a very simple json:

{

"datetime": "2020-01-27T07:07:27.744489",

"values": [2210, 13260, 26837, 30913, 46491, 95062, 41528, ...]

}

Python

Although sophisticated json validators exist (example, example), passing the json into a dataclass is a simple way to check the presence of keys. Dataclasses avoid writing the boilerplate __init__() method when a class’ only responsibility is to hold data. The code below creates a dataclass that has a datetime field which is typed as a string and a values field that holds a list of integers. Note that the type hints are not used at runtime but are used to check type consistency before running. The __post_init__ checks values at runtime.

Python: Dataclass to deserialize the example json. The __post_init__ is called after the initialization to cast types where needed.

Although the __post_init__ method gives some validation, it is implemented manually.

For our example, the dataclass would be used to deserialize the json like this:

Deserializing a json into a dataclass named Data.

Go

Building a data model in Go can be done using structs. The struct named Data (see below) works similar to the Python dataclass above. One advantage is that we don’t need to create a __post_init__ method since Go is statically-typed and gives an error if the value does not match the expected type. The `json:` tag adds name conversion when the json keys are named differently than the struct fields. The json is decoded at line 7 into a Data instance.

Note that building the data model using structs will be essential to create parquets, and tags for parquet creation are already present in the struct.

Go: Data struct and deserialization of the json. The stuct is essential in creating parquet files.

That’s all we need to do for a simple validation of the json in Go.

Conclusion deserialization

Go structs with the json package can be used to deserialize validated jsons. Python has dataclasses that can validate and deserialize jsons. Since Python is a dynamically-typed language, runtime validation has to be done manually. For Go, having the correct types is a necessity and requires no additional code.

Writing parquet to S3

Parquet is the go-to format for data science and having a good way to create them is essential for our Lambda function. Both Python and Go implementations can deliver a pandas-compatible parquet file.

For the case study the goal is to produce a tabular format which looks like this:

The parquet files have a datetime column of strings and a values column containing lists filled with int64s.

Python

Converting a list of Data instances to parquet requires the following actions:

- Convert the list of

Datainstances to a list of datetimes and values. - Create parquet columns and assign the correct parquet format to the column.

- Create a pyarrow table, convert to a pandas dataframe and convert to parquet before writing to S3.

Python: convert the deserialized json to parquet for storage on S3. The to_pandas() step requires pandas to be installed, and restricts you to version pyarrow version 0.12.1.

One of the main drawbacks of creating parquet files with Python is the size of the pandas and pyarrow packages. Deploying a Lambda with aiohttp and pyarrow yields a zip of 135MB including dependencies. If we want to add pandas as well a problem occurs: Lambda does not allow deployments larger than 250MB. The current solution is to downgrade pyarrow to version 0.12.1. Another solution is to exclude pandas if you can miss it, or use fastparquet. Still, Python packages can bring you to the deployment limit for Lambda.

Another issue with the current example is that the pa.string and pa.int64 are hardcoded. This is not sustainable for more complex responses, and some hacky-code must be written to avoid code duplication.

Go

Creating parquets in Go with the parquet-go package is relatively new and under development. The package is implemented purely in Go. To implement the package in our api calling example, the following needs to be done:

- Create a data model using structs

- Annotate the struct fields with the parquet supported types

- Create

ParquetFileandS3FileWriterinstances - Write each

Datainstance to theParquetFilein-memory - Close the

ParquetFileand theS3FileWriter

An advantage of the Go parquet package is that we can pass the Data instances straight to the parquet writer. The Parquetwriter then uses the struct tags to serialize the instance to parquet. This is great, since the struct acts a single point of truth for json, parquet, and Go representations of the data coming from the api. The code below gives the high-level steps, a complete implementation is found in the full example.

Go: high-level steps when writing a parquet file. For implementation of the parquet function, see the full example.

Since this blog compares Go and Python on AWS Lambda, the Go code should write pyarrow/pandas-compatible parquets. It is particularly important to check compatibility of the parquets and make sure that the types match. In the current example, the values column must be pyarrow compatible. Use type=List instead of type=Repetitiontype to indicate a true columnar format (as recommended in the readme):

Go: Parquet tag to create pyarrow compliant column filled with lists.

When setting up the example, I encountered several hurdles trying to find the correct tags and understand the error messages. As the parquet-go package develops and gets more users, I expect more documentation and blogs to appear (like this one).

Parquet conclusion

Creating parquet files on Lambda with Python and Go are quite different cases. For Python no pure-Python parquet implementation exists. A Lambda deployment with pyarrow (0.15.1) and pandas currently exceeds the limits of a Lambda deployment (250MB). To stay below this limit the old pyarrow version 0.12.1 needs to be used.

Go benefits from a pure Go package to create parquet files: parquet-go. For the minimal example shown here, this creates an executable of ~20MB, which is easily shippable to AWS. The struct-based data model creates a single point of truth, but filling up the Parquetfile instance and writing the file requires some more attention compared to Python.

Conclusion

Serverless functions like AWS Lambda are great for lightweight cloud architecture. Though we should be careful not to introduce complexity in our CI/CD and Terraform code, which may outweigh the advantages of Lambda.

Here, I compared the deployment of a small data factory to AWS Lambda for Python and Go. The datafactory needs to make a large number of concurrent HTTP requests and combine them in parquet files for further downstream processing.

Deployment of Python and Go prove to be different experiences. Since Lambda requires all the packages to be uploaded, Python solutions have the disadvantage of large packages. Using Lambda layers is a way of reducing build/upload time, but imposes complexity to the building process and the Terraform code. Also uploads may exceed the Lambda limits for deployment size and the package versions need to be chosen carefully. Go has an edge over Python here, since static linking creates a single executable which is then uploaded to Lambda. Since Go only compiles the needed files, the executable tends to be much smaller. Furthermore, Go can be cross-platform compiled, making Lambda development from Windows or Mac easier.

Concurrency can be leveraged to keep the memory footprint small and runtime short. Both are important within Lambda runtime and memory limits. Python’s new Asyncio and Go’s goroutines are an excellent way to get the most out of a single Lambda invocation.